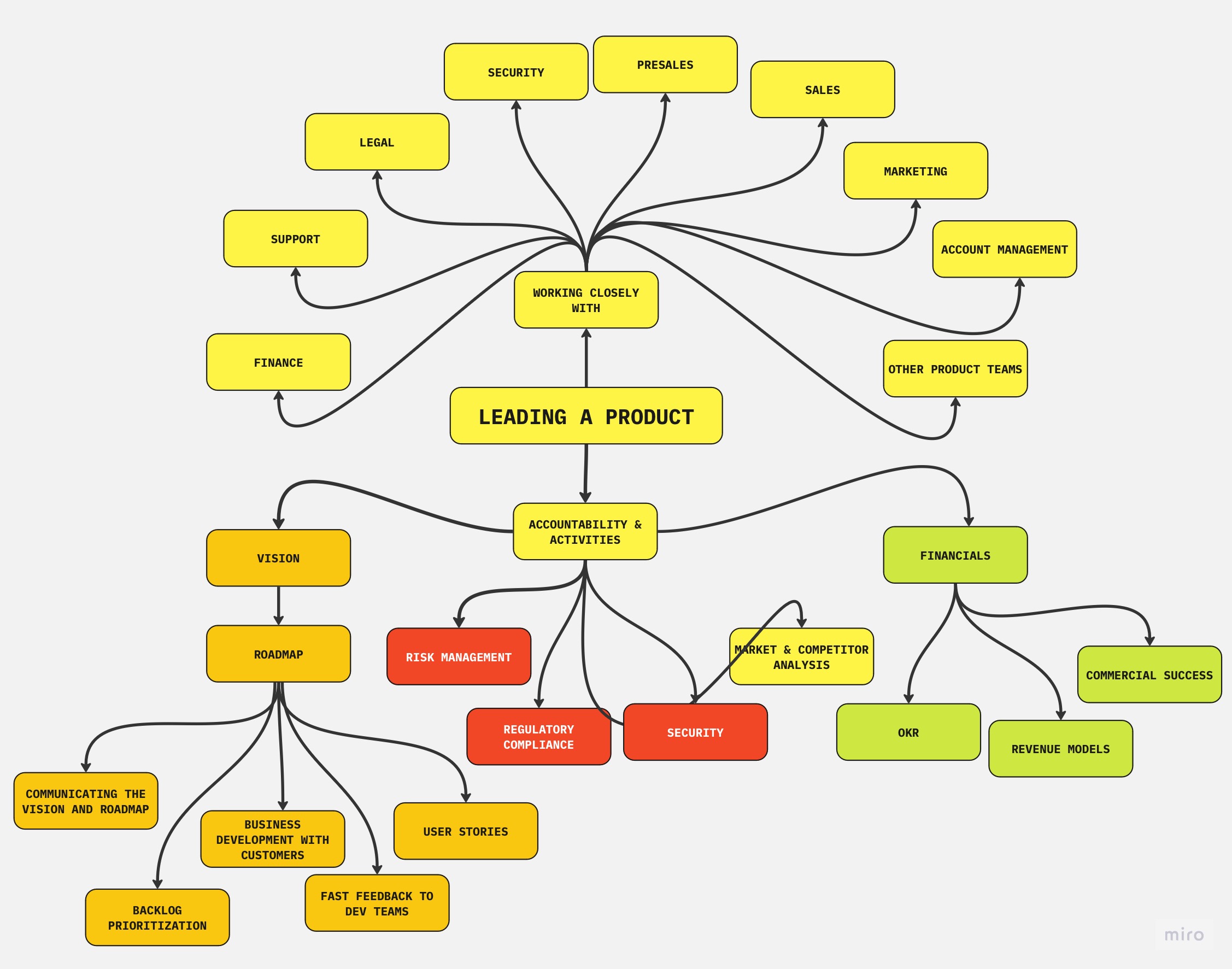

Myths and Mistakes About Product Owners

Once upon a time, there was only the product manager. Then the concept of the product owner came into the picture. It puzzled many, because we had been creating software products before, and there were already people leading products. However, it became impossible to keep up with the demand for more frequent, impactful releases of sufficient quality, and for tighter feedback loops, while maintaining the existing roles and communication structures. We can observe the indirect impact of the Kano model on software products in the form of increased workload and expectations on product managers.

Why Many Young Engineers Struggle in the First Years

Back in college, we had a professor whose exams contained some questions that people found notoriously difficult compared to the others. To get a score higher than “good enough”, we had to solve questions that included combinations of problems we had never come close to during the lectures. I did not understand why he was doing that back then. What we had seen during the lectures was enough to cover every piece of knowledge needed to solve those questions, but only a few were able to solve all of them.

Why Our Infra Integration Code Keeps Changing

Years back, we had a Maven dependency that had been part of a large project for many years. It dated from a few Java versions back. (Those were the days when a new release of Java was a rare event to witness.) But it was working just fine: no tests failing, nothing new needed. It didn’t cause any stability or performance problems at all. It didn’t have any dependency that might need an update.

A Better Analogy for Agile Organizations

No, this isn’t another Docker blog post that surprisingly uses a container ship to explain stuff. This is about looking for a better analogy for agile organizations.

I usually have a problem with the cliché examples given to describe agility. We have already moved from thinking about agility in the relatively narrow context of software teams to extending it across the whole organization and the whole chain. (This also follows the lean principle of optimizing the whole.) So examples should resonate more with larger, more heterogeneous environments. Resilience, adaptation and survival should be observable throughout the organization.

Numerous examples from real life are given to show the differences and advantages of agile organizations. Introducing new concepts through familiar examples helps the story.

What examples do we often see used to symbolize agility and rigidity?

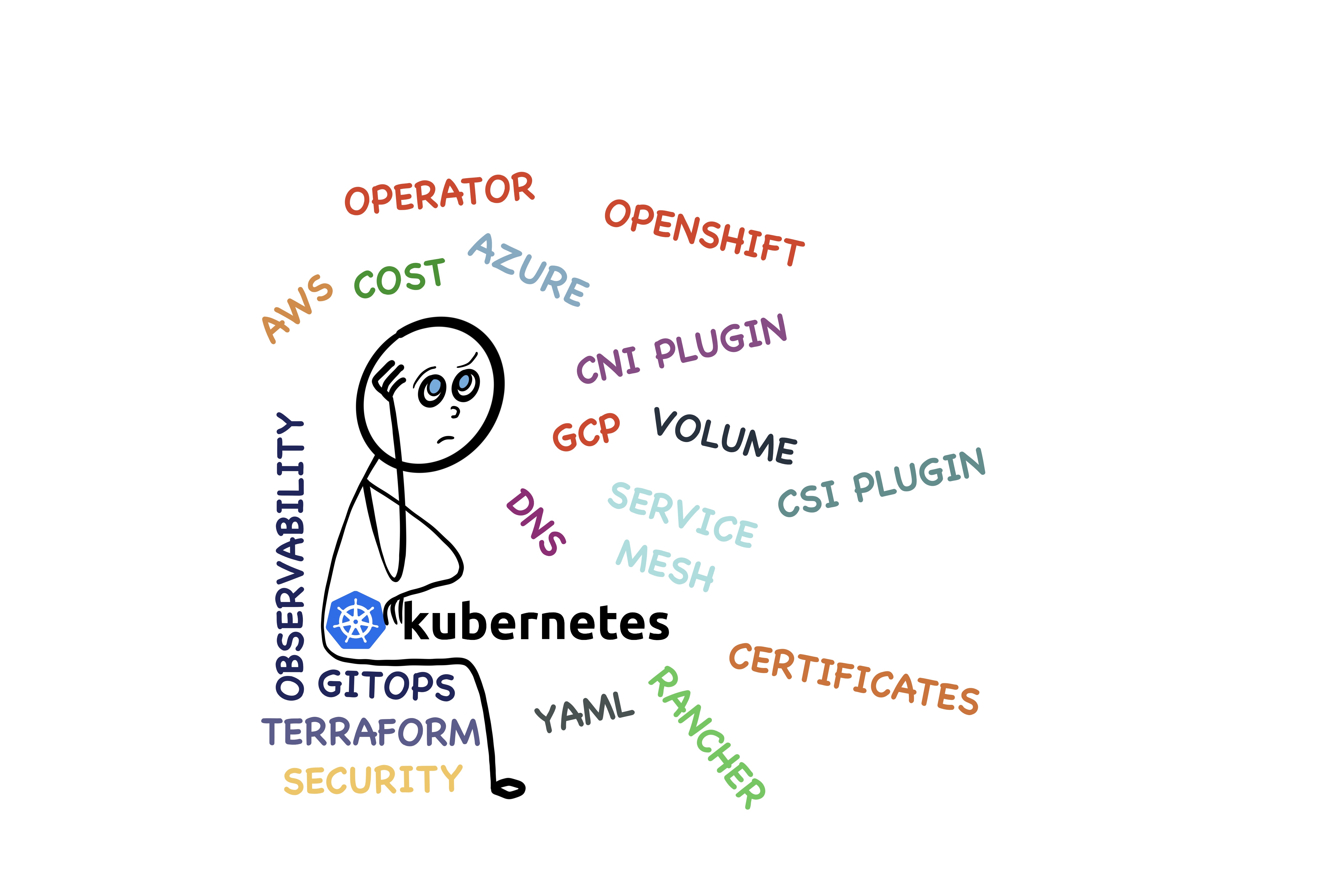

Do's and Don'ts When Moving to Kubernetes

Looking at certain parts of the software engineering discourse, it may look like Kubernetes has already become the de facto deployment environment in the world, but that is hardly the case.

Still, the majority of the work runs on virtual machines. Bare metal servers are still around to meet certain requirements. There are many reasons for this, and some of them aren’t quite possible to solve. So there are a lot of engineering teams around the world who have never worked with containers, let alone Kubernetes.

In this post, we will visit some points (definitely not an exhaustive list) to consider in the early phases of the Kubernetes journey to get results more easily. When executed carelessly, adopting Kubernetes can become a painful, demoralizing problem, and it can alienate both the business and the engineers.

Is Software Antifragile?

Nassim Nicholas Taleb’s books are influential in many areas and across many disciplines. They can change how we view and interpret the world. In The Black Swan Theory and Why Agile Principles Fit Better, we went on an adventure trying to explain why creating software with agile principles fits better than other approaches in a world described by Taleb.

In this post we will visit Antifragile, and see if we can find some more lessons for software engineering.

Top 10 Anti-Patterns Using Jira

We went through a painful outage of Atlassian products. It was difficult for engineers on both sides.

The criticism of Jira on social media often amazes me. While some of it is spot on, some of it points toward suboptimal, sometimes plain wrong, usage of Jira. In this two-part series, I will list both anti-patterns and best practices for using Jira effectively, based on my experience.

Doing Things Right the First Time... in Software Engineering

Over the last few years, I have increasingly come across the phrase "doing it right the first time" (DIRFT) in the context of various applications of software engineering (infrastructure, cloud, and microservice design as well).

However, it damages the efficiency and value delivery of software engineering when it is shoehorned into the discipline.

In the rest of this post, I’ll visit the dangers introduced by this approach.